DRT Week 2A. From value chains to value fields

Making the Interactional Creation shift operational

In Week 1, we argued that AI interventions do not land “in a tool” or “in a workflow” in isolation; they land in a Machinic Life-Experience Ecosystem (MLXE)—the smallest living configuration where value, meaning, and risk are co-produced. We also gave that ecosystem a practical 2×2 ontology: Life territories (virtual-real: roles, identity, legitimacy), Energetic-signaletic flows (actual-real: handoffs, queues, exceptions), eXperience universes (virtual-possible: what counts as evidence and commitment), and Machinic phyla (actual-possible: interfaces, permissions, telemetry, and updateable infrastructure). Week 2 builds directly on that unit of analysis: once AI mediates interaction at scale, value stops behaving like something that “moves along a chain” and starts behaving like something that stabilizes (or drifts) across a field of interactions.

The attractive—but wrong—simplification

For decades, the managerial default has been a value-chain picture: value “moves” from upstream activities to downstream outcomes, with handoffs, margins, and accountability located along a line.

That picture is not merely old-fashioned; it becomes analytically wrong when AI intermediates perception, choice, and coordination. In a co-intelligence world, value is increasingly co-created in the interaction itself: in the live coupling of human intention, model inference, workflow routing, and governance constraints. Transactions and efficiency metrics alone no longer define value well; it is co-created through lived experience shaped in human–AI interaction flows.[1]

So the “wrong turn” is subtle: leaders keep using chain logic (linear sites, stable boundaries, sequential control points) while the system’s value-creating machinery has become field-like (distributed, coupled, and dynamically governed).

Why the chain fails (the analytic reason, not the slogan)

A chain model presumes that the site of value creation is legible ex ante: manufacturing, marketing, sales, service; product, channel, customer journey.

In AI-saturated settings, the site often becomes inference-time: a moment-by-moment coupling in which (i) the user’s problem is reframed, (ii) a model’s output is treated as evidence or advice, (iii) the workflow decides what happens next, and (iv) an accountability surface is implicitly assigned (“who is responsible now?”). Our forthcoming book, Co-Creating the Future with AI (Ramaswamy, Ozcan, and Narayanan, 2026), makes this shift concrete by repositioning value creation away from a stage-by-stage chain and into interactive co-creation—where experience and intelligence are produced through interaction, not merely delivered after production.

Once the value-producing mechanism is a live coupling, classic boundaries (firm vs market; producer vs consumer; upstream vs downstream) stop being reliable analytic containers. The “unit” is no longer a chain segment. It is a field of interaction.

The upgraded concept: “value field,” operationally defined

The shift in one sentence:

Value is no longer best modeled as “moving along a chain”; it emerges in fields of interaction—especially when AI intermediates perception, choice, and coordination.

Operationally, the value field depends on four co-implicated design variables, aligned with the MLXE differentiation introduced in Week 1:

Where interaction happens (E/M surfaces): interfaces, copilots, agents, platforms, handoffs, telemetry—i.e., the operational and infrastructural surfaces where action is routed and recorded.

What counts (X surfaces): evidence, commitments, evaluation regimes, definitions of “done,” thresholds for escalation—i.e., the virtual-possible rules that shape what is legitimate before it is enforced.

Who becomes responsible (L surfaces): roles, legitimacy boundaries, identity/authority assignments, “right to decide,” “right to override,” “right to contest.”

How flows accelerate (E/M dynamics): automation speed, routing logic, model updates, tool integration—i.e., how the actual-real operations and actual-possible architectures intensify the system’s tempo.

Ontological coordinates matter here: L is virtual-real, E is actual-real, X is virtual-possible, and M is actual-possible. So we are not mixing “meaning” and “mechanism,” but tracking how each domain differentiates and co-evolves without collapsing into the others.[2]

If you want a compact “definition you can use in a meeting”:

Value field = the bounded set of interactions through which outcomes are co-produced, together with the obligations, roles, and interfaces that make those outcomes scalable without drift.

A minimal working model (diagram-in-words)

If a value chain is “activities → outputs → customer value,” then a value field is closer to:

(L, E, X, M) co-evolve through interaction cycles; value stabilizes when commitments (X) and legitimacy (L) keep pace with accelerated flows (E) enabled by designable mechanisms (M).

Or, in executive shorthand:

E answers: How fast are we moving?

X answers: What exactly are we committing to, and what counts as proof?

L answers: Who is authorized, accountable, and socially legible in this new arrangement?

M answers: What can we actually reconfigure—interfaces, routings, escalation rules, update loops?
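The "keep pace" condition in the working model can be turned into a rough check. The sketch below is a deliberately crude proxy, not a method from the source: it assumes that change in each domain can be counted per review cycle, and the `FieldSnapshot` fields and the `lagging_domains` helper are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass
class FieldSnapshot:
    """Hypothetical per-review-cycle change counts for each MLXE domain."""
    l_updates: int  # L: decision rights or role definitions revised
    e_changes: int  # E: flow changes, e.g. newly automated handoffs
    x_updates: int  # X: evidence rules or commitments revised
    m_changes: int  # M: interface, routing, or model updates shipped


def lagging_domains(s: FieldSnapshot) -> list[str]:
    """Return which of L and X changed less often than E in this cycle.

    Assumption: comparing raw counts is a stand-in for "keeping pace";
    a real review would weigh the changes, not just count them.
    """
    lagging = []
    if s.l_updates < s.e_changes:
        lagging.append("L")
    if s.x_updates < s.e_changes:
        lagging.append("X")
    return lagging


# Example cycle: flows changed five times, roles once, evidence rules never.
snapshot = FieldSnapshot(l_updates=1, e_changes=5, x_updates=0, m_changes=7)
print(lagging_domains(snapshot))
```

An empty result does not prove the field is stable; it only says that, by this proxy, commitments and legitimacy were revised at least as often as flows were.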

Co-Creating the Future with AI (Ramaswamy, Ozcan, and Narayanan, 2026) makes the same point in a different register: competitive advantage shifts from controlling activities to orchestrating interactivities across ecosystems, with artifacts-persons-processes-interfaces (APPI) co-shaping evolution.

The instrumentation asymmetry (the practical trap)

Here is the predictable failure mode once firms accept “interaction fields” rhetorically but instrument them poorly:

Over-measured: E/M

throughput, cycle time, uptime

unit cost, token cost, latency

accuracy, resolution rates, defect counts

Under-measured: L/X

trust, meaning, role stability

obligation clarity, evidence rules

legitimacy fractures (internal and external)

This asymmetry is not a mere “soft vs hard” problem. It is a governability problem. When E accelerates and L/X do not, the organization gets faster while becoming harder to steer, because commitments and responsibility cannot travel at the same speed as automation.
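One way to make the asymmetry visible is to tag every metric on a review dashboard with the MLXE domain it instruments and then count coverage per domain. What follows is a minimal sketch in Python under stated assumptions: the metric catalogue, the domain tags assigned to each metric, and the helpers `coverage_by_domain` and `asymmetry_ratio` are all hypothetical and chosen for illustration.

```python
from collections import Counter

# Hypothetical metric catalogue: metric name -> MLXE domain it instruments.
# The E-versus-M tagging is an assumption; the text groups them as E/M.
METRICS = {
    "throughput": "E",
    "cycle_time": "E",
    "resolution_rate": "E",
    "uptime": "M",
    "token_cost": "M",
    "latency": "M",
    "role_stability_survey": "L",
    "evidence_rule_audit": "X",
}

DOMAINS = ("L", "E", "X", "M")


def coverage_by_domain(metrics):
    """Count how many metrics instrument each MLXE domain."""
    counts = Counter(metrics.values())
    return {d: counts.get(d, 0) for d in DOMAINS}


def asymmetry_ratio(coverage):
    """Ratio of E/M metrics to L/X metrics; infinite if L/X has none."""
    em = coverage["E"] + coverage["M"]
    lx = coverage["L"] + coverage["X"]
    return float("inf") if lx == 0 else em / lx


coverage = coverage_by_domain(METRICS)
print(coverage)
print(asymmetry_ratio(coverage))
```

A count says nothing about metric quality, so the ratio is best read as a prompt for discussion: a high value suggests the dashboard can see tempo far better than it can see commitments and legitimacy.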

Case touchpoints

OpenAI / ChatGPT: the interaction boundary is the value engine

ChatGPT’s “product” is not just a model. It is a continuously evolving interaction space where users, interfaces, feedback loops, safety constraints, and integrations co-produce outcomes—and strategic direction itself becomes ecosystemic and co-evolved.

Microsoft: Copilot alters coordination fields across functions

The moment Copilot becomes a pervasive layer across work, value realization shifts from “feature adoption” to “coordination redesign”: who drafts, who verifies, what counts as acceptable evidence, where escalation lives, and how accountability is recorded.

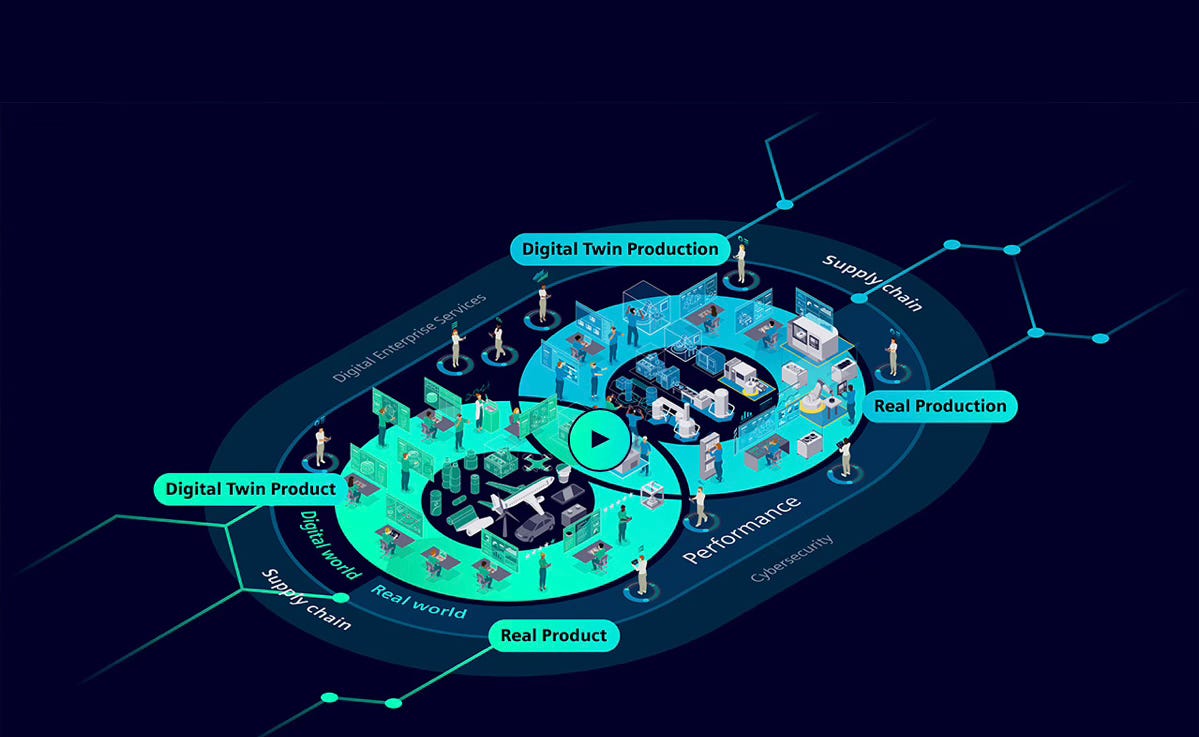

Siemens: twins and partnerships dissolve “where is value produced?”

A twin-enabled industrial stack (across partners) is a paradigmatic value field: outcomes emerge through coupled design/operate cycles, not within a single firm’s activity map. Governing complex, multi-domain systems requires accountability models embedded in workflows (not merely declared), including audit trails and access controls.

L’Oréal: advice/device/data/identity becomes a field, not a linear journey

When diagnostics, personalization, content, and routines are mediated by AI, “the customer journey” stops behaving like a line. It becomes a co-produced field where identity, interpretation, and evidence (“what counts as good for me?”) are shaped interactively.

Misuses and limits (how the concept gets mangled)

Calling every ecosystem a “field.” If interactions are not recurrent, and if commitments/roles are not being renegotiated through the system, you may still be in a chain-dominant world.

Treating the field as purely social (“culture”) or purely technical (“platform”). A value field is precisely the co-evolution of L/E/X/M.

Assuming “more autonomy” is automatically “more value.” Autonomy increases the need for explicit obligation travel and enforceable commitments.

Takeaway toolkit (a crib you can reuse internally)

Use these five diagnostics in any AI initiative review:

Boundary: Where exactly is the interaction happening—what interface, what workflow seam, what integration?

Commitment: What is the “promise” being made at inference-time, and what counts as evidence?

Obligation travel: When an action is taken (human or AI), where does the responsibility go—and where can it fall off?

Measurement symmetry: Are we measuring L/X with anything like the seriousness of E/M?

Repairability: If the field drifts, what can we change quickly—interface constraint, escalation rule, evidence type, decision right?

If you can answer those crisply, you are no longer “doing AI.” You are governing a value field.
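The obligation-travel diagnostic lends itself to a simple trace check. The sketch below assumes that each step in a workflow can be logged with the role accountable after it; the `Step` record, its fields, and the `dropped_obligations` helper are hypothetical, as is the example trace.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    """One action in a workflow trace (hypothetical schema)."""
    action: str
    actor: str             # "human" or "ai"
    owner: Optional[str]   # role accountable after this step; None if unrecorded


def dropped_obligations(trace):
    """Return the actions after which no accountable owner is recorded."""
    return [s.action for s in trace if s.owner is None]


# Illustrative trace: responsibility falls off at the automated send.
trace = [
    Step("draft reply", "ai", "support agent"),
    Step("auto-send reply", "ai", None),
    Step("escalate refund", "human", "team lead"),
]
print(dropped_obligations(trace))
```

Any action the check returns marks a point where responsibility can fall off, which is where an escalation rule or a decision right would need to be made explicit.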

[1] See Creative Transformation of Organizational Ecosystems (Ozcan and Ramaswamy, 2025). Also, Co-Creating the Future with AI (Ramaswamy, Ozcan, and Narayanan, 2026).

[2] See Chapter 3 of Creative Transformation of Organizational Ecosystems (Ozcan and Ramaswamy, 2025).